Artificial intelligence

ChatGPT makes its play for doctors

OpenAI on Wednesday launched ChatGPT for Clinicians, a free version of the chatbot that can be used for searching medical evidence, writing letters to insurance companies, and summarizing patient records. The service is open only to clinicians who have a National Provider Identifier (NPI) number. OpenAI offers to sign agreements that make it possible to use the product with patient data in compliance with the health privacy law HIPAA.

OpenAI already has ChatGPT offerings for health care organizations but says the new tool is "designed for individual clinicians whose hospitals or clinics don’t yet offer a centralized AI tool." Features specific to this product include citations to medical literature.

With the launch, the company is offering a service that directly competes with free offerings from Doximity and OpenEvidence, which have exploded in popularity. OpenEvidence's data suggests hundreds of thousands of clinicians are regularly using its service.

NPI verification has been a controversial aspect of these tools. Companies argue it keeps powerful medical search out of reach of ordinary people who don't need it and might misinterpret findings. It also confirms the identity of clinicians who are targeted with advertising. Will OpenAI layer on advertising here? We'll see.

Telehealth

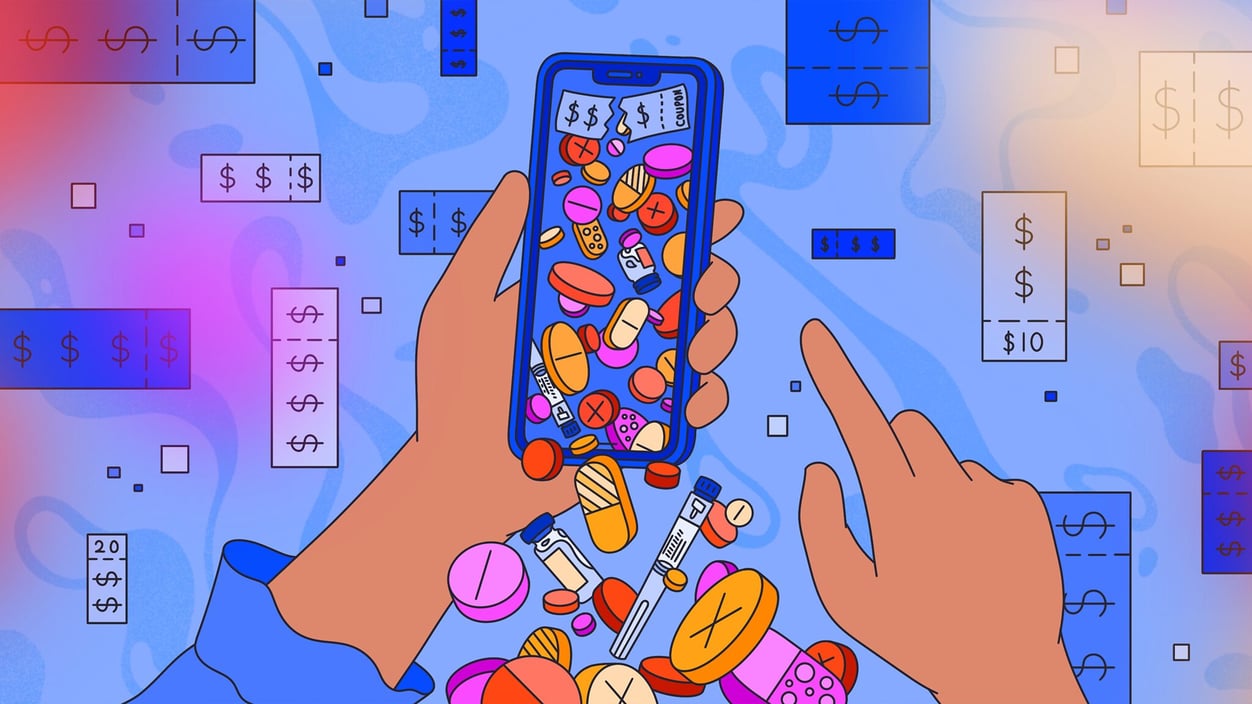

Bargain telehealth for drug access

Patients getting drug prescriptions over telehealth is now commonplace. The practice has drawn the scrutiny of health policy experts, lawmakers, and regulators who worry that drugs are being marketed inaccurately; that patients are receiving subpar care; and that arrangements between drugmakers and telehealth companies may violate anti-kickback statutes, which forbid incentives that might influence the health care decisions of patients or providers.

So what, then, do we make of a $10 coupon code that lures a potential patient to a telehealth visit to explore the potential of Addyi, a drug for women with low libido? If the drugmaker is subsidizing the visit, is it a kickback? Do the providers at telehealth companies really explore all the possible treatment options or just rubber stamp a pharma partner’s drug? In a new story, Katie Palmer explores the pharmaceutical industry’s growing use of bargain basement telehealth visits and what it portends for the future of drug marketing.


Hospitals

WISeR model causes care delays, hospital report says

Patients in Washington are reportedly waiting as much as four times longer to complete procedures covered by an experimental Medicare model that is using artificial intelligence to review the appropriateness of certain health care services. The data from the Washington State Hospital Association was published by Sen. Maria Cantwell (D-Wash.), who questioned Health Secretary Robert F. Kennedy Jr. about the program during a Senate Finance Committee hearing on Wednesday.

Prior authorization is already used by insurers that run Medicare Advantage plans, and the Wasteful and Inappropriate Service Reduction (WISeR) model from the CMS innovation center is testing its use in traditional Medicare. The goal is to cut fraud and unnecessary spending, but the plan has faced opposition since it was announced last year. As Tara Bannow writes, the report is the first to document alleged patient harms tied to the program.


Policy

AMA urges mental health chatbot and wellness product crackdown

In a series of letters, American Medical Association CEO John Whyte urged lawmakers to pursue safeguards around the use of chatbots in mental health care. Many states have already passed their own laws governing the use of chatbots, and pressure from the doctor lobby may turn up the heat for national rulemaking to establish uniform standards.

Most provocatively, Whyte urges Congress to direct the Food and Drug Administration to clarify its stance on what kinds of health AIs count as general wellness products versus medical devices. Specifically, the AMA wants to close the loophole that allows companies to dodge regulators by claiming their product is not intended for medical uses. “Determinations of regulatory status should be based on the function of the technology and should not be solely based on marketing claims,” Whyte argues. “Simple disclaimers included by chatbots should not be considered sufficient to escape regulatory review.”

Another provocative recommendation urges rules that discourage the use of advertising in mental health chatbots. The AMA also wants Congress to mandate that bots be able to identify and respond to risks of self-harm and suicide, and that developers engage in post-deployment safety monitoring with reporting requirements.

We’ve heard many of the other asks before in different forms, such as requirements that bots clearly disclose that they are AI and not present themselves as licensed professionals.
